Free

Download the package from the manager of your site.

Warning! This package requires MODX 2.3 or later.

RobotsBuilder is a separate section in the admin area where you can create robots.txt and sitemap.xml virtual files.

These files no longer sit in the resource tree where they can confuse content managers, and their contents remain editable at any time.

The extra lets you create a separate robots.txt and sitemap.xml for each context, which is useful if you run a multi-site setup from a single manager.

When a request arrives for the robots.txt or sitemap.xml address, the extra intercepts it and outputs the code entered in the corresponding field, whether or not a resource with the same URL exists.
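The interception described above can be sketched roughly as follows. This is a minimal Python illustration of the lookup logic only, not the extra's actual code (which runs inside MODX). The `VIRTUAL_FILES` mapping, the context keys `web` and `shop`, the sample file contents, and the `resolve_virtual_file` function are all hypothetical names chosen for illustration.

```python
from typing import Optional

# Hypothetical storage: (context key, file name) -> content entered in the manager.
VIRTUAL_FILES = {
    ("web", "robots.txt"): "User-agent: *\nDisallow: /manager/\n",
    ("web", "sitemap.xml"): '<?xml version="1.0" encoding="UTF-8"?>\n<urlset/>\n',
    ("shop", "robots.txt"): "User-agent: *\nDisallow: /cart/\n",
}

# Paths this sketch treats as virtual files; everything else uses normal routing.
INTERCEPTED_PATHS = {"robots.txt", "sitemap.xml"}


def resolve_virtual_file(context_key: str, request_path: str) -> Optional[str]:
    """Return the stored content for an intercepted path, or None to fall through."""
    path = request_path.lstrip("/")
    if path not in INTERCEPTED_PATHS:
        return None  # not a virtual file: leave it to normal resource routing
    # The lookup runs before resource routing, so a stored entry is returned
    # even if a resource with the same URL exists.
    return VIRTUAL_FILES.get((context_key, path))


if __name__ == "__main__":
    print(resolve_virtual_file("shop", "/robots.txt"))
```

Keying the mapping by context is what allows each site in a multi-context installation to return its own file for the same URL.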

Technical documentation

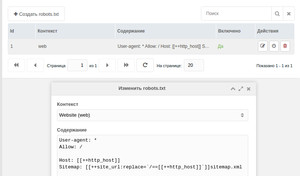

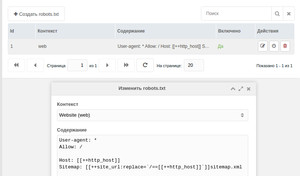

To create a robots.txt or sitemap.xml, open the component page in the manager.

When creating a file, specify the context to which the file and its contents belong.

Options

No options available.

F. A. Q.

What should I do if my robots.txt code does not appear or change?

Check that there is no physical robots.txt file in the site root. If there is one, the web server serves it directly, without involving MODX.
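One way to run this check from a shell on the server is a short script like the sketch below. The `find_shadowing_files` helper is a hypothetical name, the site root path is something you supply yourself, and including sitemap.xml in the check is an assumption (the answer above only mentions robots.txt).

```python
import sys
from pathlib import Path
from typing import List

# File names a web server would typically serve straight from disk if present.
# Checking sitemap.xml as well is an assumption; the FAQ only names robots.txt.
SHADOWING_NAMES = ("robots.txt", "sitemap.xml")


def find_shadowing_files(site_root: str) -> List[str]:
    """Return the names of physical files in site_root that could bypass MODX."""
    root = Path(site_root)
    return [name for name in SHADOWING_NAMES if (root / name).is_file()]


if __name__ == "__main__":
    # Pass the site root as the first argument; defaults to the current directory.
    site_root = sys.argv[1] if len(sys.argv) > 1 else "."
    found = find_shadowing_files(site_root)
    if found:
        print("Physical files found (remove or rename them):", ", ".join(found))
    else:
        print("No physical robots.txt or sitemap.xml in", site_root)
```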

What if the sitemap.xml opens with an XML parsing error?

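Try making the snippet call in the file content uncached by adding an exclamation mark after the opening brackets, for example `[[!SnippetName]]` instead of `[[SnippetName]]` (SnippetName is a placeholder for the snippet you actually use).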
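To see exactly what the parser objects to, it can help to run the returned sitemap text through an XML parser yourself. The sketch below uses Python's standard `xml.etree.ElementTree` module; the `xml_parse_error` function name and the sample documents are hypothetical, and you would paste in the response body you actually receive.

```python
import xml.etree.ElementTree as ET
from typing import Optional


def xml_parse_error(document: str) -> Optional[str]:
    """Return the parser's error message, or None if the text is well-formed XML."""
    try:
        # Parse from bytes so an encoding declaration in the prolog is honoured.
        ET.fromstring(document.encode("utf-8"))
    except ET.ParseError as exc:
        return str(exc)  # includes the line and column of the problem
    return None


if __name__ == "__main__":
    good = "<urlset><url><loc>https://example.com/</loc></url></urlset>"
    bad = "<urlset><url></urlset>"  # the <url> element is never closed
    print(xml_parse_error(good))
    print(xml_parse_error(bad))
```

The reported line and column usually point at the first character the parser could not accept, which narrows down which part of the file content produced it.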

1.0.3-beta

- Added sitemap.xml creation

1.0.2-beta

- Fixed description in RU lexicon

1.0.1-beta

- Multi-context management

1.0.0-beta

- First release